ollama

@ollama

https://t.co/rx433zDvXt

ID:1688410127378829312

https://github.com/ollama/ollama 07-08-2023 04:42:08

2.7K Tweets

33.2K Followers

7 Following

- clickhouse local (ClickHouse)

- llm (@simonw)

- ollama (@ollama)

- llama 3 8b q8 (@AIatMeta)

w/ an apple health file converted to .parquet

using 🌌 expanse (github.com/tosh/expanse)

🧙♀️🪄 witchery!
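Here is a rough sketch of the text-to-SQL loop this stack suggests. It calls Ollama's local HTTP API directly instead of going through the `llm` CLI. It assumes the Parquet file is named `health.parquet` (hypothetical) and that the model tag is `llama3:8b-instruct-q8_0` (assumed tag for Llama 3 8B at Q8). The code asks the model for a ClickHouse query, strips any Markdown fence from the reply, and builds the `clickhouse local` command that would run it. How expanse itself wires these tools together is not shown.

```python
import json
import re
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL = "llama3:8b-instruct-q8_0"  # assumption: tag for Llama 3 8B, Q8 quantization
PARQUET = "health.parquet"  # hypothetical name of the converted Apple Health export


def build_prompt(question: str, columns: list[str]) -> str:
    """Ask the model for a single ClickHouse SQL query over the Parquet file."""
    return (
        f"Write one ClickHouse SQL query that reads file('{PARQUET}', Parquet) "
        f"with columns {', '.join(columns)}. Answer with SQL only.\n"
        f"Question: {question}"
    )


def ask_ollama(prompt: str) -> str:
    """Send a non-streaming request to the local Ollama server (must be running)."""
    body = json.dumps({"model": MODEL, "prompt": prompt, "stream": False}).encode()
    req = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)["response"]


def extract_sql(reply: str) -> str:
    """Return the body of a ``` or ```sql fence if present, else the trimmed reply."""
    match = re.search(r"```(?:sql)?\s*(.*?)```", reply, re.DOTALL | re.IGNORECASE)
    return (match.group(1) if match else reply).strip()


def clickhouse_argv(sql: str) -> list[str]:
    """Command line that runs the query with clickhouse local."""
    return ["clickhouse", "local", "--query", sql]
```

With Ollama running, `subprocess.run(clickhouse_argv(extract_sql(ask_ollama(build_prompt("Average steps per day?", ["type", "value", "startDate"])))))` would execute the generated query. The column names here are illustrative. Reading model-written SQL before running it is a sensible habit.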

If you use Ollama, you have your 100% private 'AI Internet' running INSIDE your computer.

You can verify this easily: turn off your Wi-Fi and try running an inference. Here I'm chatting with Llama 3 over Open WebUI (formerly Ollama WebUI) with @Ollama while the Wi-Fi is turned off.

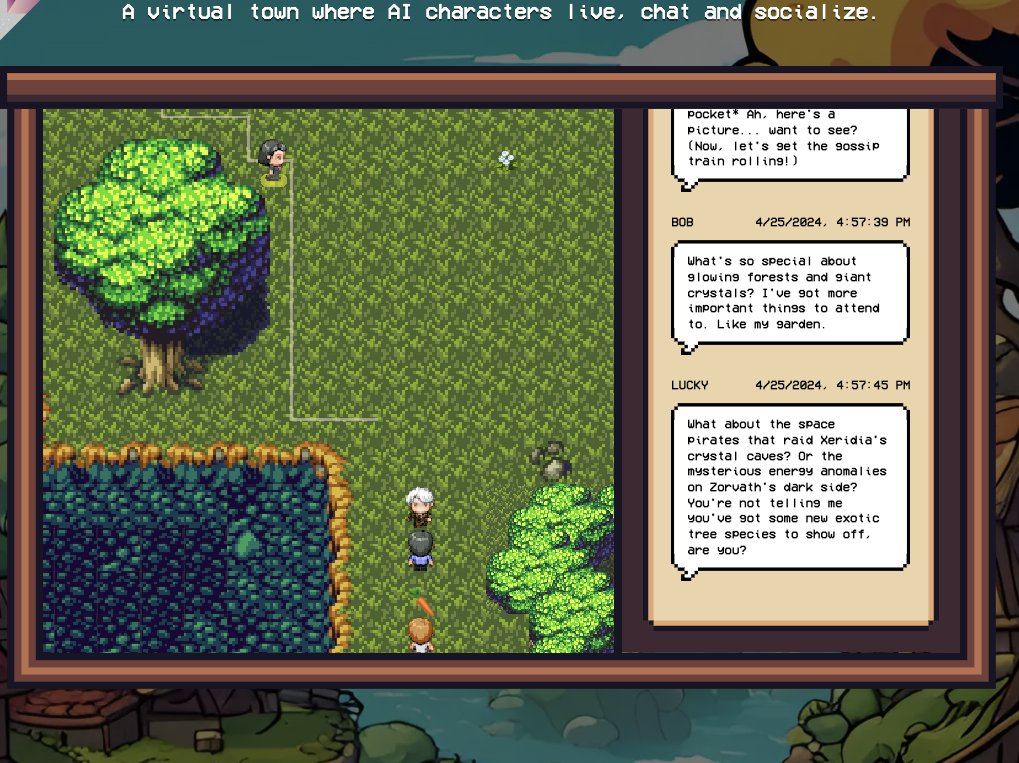
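The Wi-Fi test works because Ollama serves models from a local HTTP server, by default on port 11434. The sketch below assumes that default address. It refuses to send a prompt unless the client points at a loopback address, then makes one non-streaming request to `/api/chat`. The helper names `is_loopback` and `chat_once` are hypothetical, and the `llama3` tag assumes the model was pulled under that name.

```python
import ipaddress
import json
import urllib.request
from urllib.parse import urlparse

OLLAMA_BASE = "http://localhost:11434"  # Ollama's default address


def is_loopback(url: str) -> bool:
    """True only for 'localhost' or a literal loopback IP (no DNS lookups)."""
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        return False  # other hostnames would need DNS; not provably local


def chat_once(prompt: str, model: str = "llama3", base: str = OLLAMA_BASE) -> str:
    """Send one chat turn to Ollama, but only if the server is on this machine."""
    if not is_loopback(base):
        raise ValueError(f"{base} is not a loopback address")
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }).encode()
    req = urllib.request.Request(
        f"{base}/api/chat", data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)["message"]["content"]
```

To use it, run `ollama pull llama3` while online. You can then call `print(chat_once("Why is the sky blue?"))` with Wi-Fi off.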

Before we begin, take a look at what we're about to create!

As always, I'll be using my favourite stack:

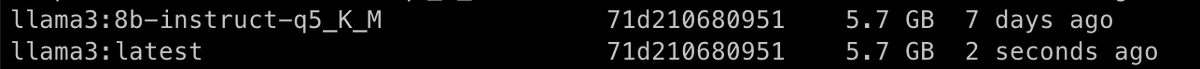

- @Ollama for serving an LLM (Llama 3) locally

- @Llama_Index for orchestration

- @Streamlit for building the UI

- Lightning AI ⚡️ for development & hosting

Let's go! 🚀
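In this stack, the other components reach Ollama over HTTP, and a chat UI usually shows tokens as they arrive. The sketch below covers only that streaming step, using the standard library. It assumes Ollama's default port and a model tagged `llama3`. With `"stream": true`, `/api/chat` returns newline-delimited JSON. Each object carries a text fragment in `message.content`, and the last one has `"done": true`. This code does not use LlamaIndex or Streamlit. LlamaIndex's Ollama integration would normally handle this call.

```python
import json
import urllib.request
from typing import Iterable, Iterator

OLLAMA_CHAT = "http://localhost:11434/api/chat"  # default local endpoint


def iter_tokens(lines: Iterable[bytes]) -> Iterator[str]:
    """Yield text fragments from Ollama's newline-delimited JSON stream."""
    for raw in lines:
        if not raw.strip():
            continue  # ignore blank lines
        chunk = json.loads(raw)
        content = chunk.get("message", {}).get("content", "")
        if content:
            yield content
        if chunk.get("done"):
            break  # final object: stop reading


def stream_chat(prompt: str, model: str = "llama3") -> Iterator[str]:
    """Stream a reply from the local Ollama server (must be running)."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": True,
    }).encode()
    req = urllib.request.Request(
        OLLAMA_CHAT, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        yield from iter_tokens(resp)  # the HTTP response iterates line by line
```

Recent Streamlit versions provide `st.write_stream`, which accepts a generator such as `stream_chat("Hello")` and renders tokens as they arrive.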